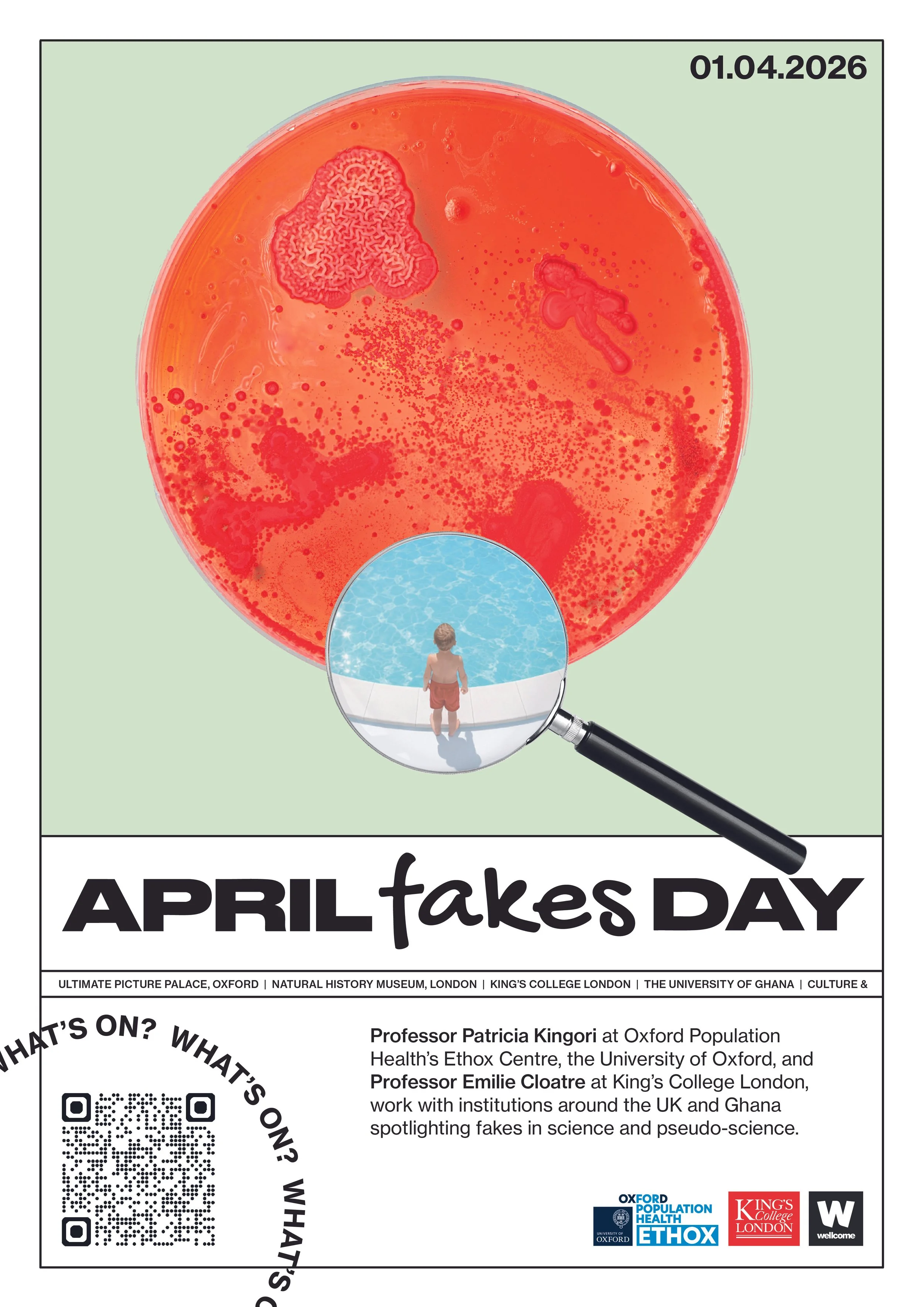

This year’s April Fakes Day focuses on questioning ideas of fakery in science.

Once again, we explore the blurriness between real and fake in fun and engaging ways.

In collaboration with Professor Patricia Kingori at Oxford Population Health’s Ethox Centre, the University of Oxford, and Professor Emilie Cloatre at King’s College London, institutions around the UK and Ghana will spotlight fakes in science and pseudo-science.

April Fakes Day serves as an opportunity to engage with the complexities of fakery and its role in shaping our understanding of reality. We engage with collections, research and the stories we tell to celebrate curiosity, creativity and critical thinking.

For information on how Between Deception and Dissent approaches contested or fake health claims, and the law’s role in responding to them, listen to the podcast Research in Action.

For more information on this year’s April Fakes Day, listen to the Nature podcast, where Patricia Kingori explains more about the event.

Previous April Fakes Day events can be found here.

-

Ultimate Picture Palace, Jeune Street, Oxford, OX4 1BN

Wednesday April 1st 2026 @ 6PM

Join us for a special screening of ‘Safe’, followed by a Q&A led by Professor Patricia Kingori (curator of April Fakes Day) and Professor Caitjan Gainty (King’s College London), who has worked extensively on the history of medical therapeutics and alternative & complementary therapies.

Safe is a quietly disturbing film that can be read in many ways: as a critique of self-help culture, a metaphor for the AIDS crisis, a drama about class and social isolation, and even a form of horror about the invisible dangers that surround us. We follow California housewife Carol as she seeks answers for her mystery illness, turning to self-help and alternative treatments.

-

The Natural History Museum reflect on The Platypus - nature’s fake fake

When the first platypus specimens arrived in Europe in the late 18th century, scientists didn’t quite know what to make of them. Here was a creature that seemed to have the bill of a duck, the tail of a beaver, and the fur and body of an otter. It seemed so confusing, so stitched-together, that there was a suspicion of foul play. Surely someone had glued a duck’s beak onto a mammal’s body?

As it turned out, the platypus wasn’t a hoax at all. Instead, it became one of the most admired examples of how wonderful the natural world can be: a fake fake, once dismissed as a forgery, now celebrated as a marvel.

From Suspected Hoax to Scientific Wonder

The ventral view of Ornithorhynchus anatinus type specimen

In the early 1800s, European scientists received sketches, drawings and skins of this strange Australian creature. Their first instinct was that this must be a fraud. At the time, the world was full of fabricated oddities such as P.T. Barnum’s famous Fiji Mermaid. However, the platypus soon proved to be something far more interesting than another stitched-together sideshow attraction.

George Shaw was appointed to the natural history section of the British Museum in 1791, and in 1807 became keeper, a position he retained until his death on 22 July 1813. Shaw became the first to scientifically describe the animal in 1799. Even he reportedly tried to find the seams. He wrote ‘I almost doubt the testimony of my own eyes with respect to the structure of this animal’s beak.’ Yet every examination confirmed the truth: the platypus was real, and baffling to scientists of the day. Shaw named the animal Platypus anatinus: Platypus meaning ‘flat-footed’ and anatinus being the Latin for ‘duck-like’, which gives us the common name we use today, duck-billed platypus.

Unfortunately, the Platypus part of the original scientific name did not stick for very long because another scientist had already used that name for a group of beetles. A few years later the scientific name was changed to the one we still use today, Ornithorhynchus anatinus. Despite how different the word Ornithorhynchus is, it is still quite appropriate as it means “bird-snout”.

Of course, the First Nations peoples had different names for this species, such as tambreet, mallunggang, billadurong and tohunbuck among others, and those predate any names given to the species by Europeans.

The specimen that George Shaw examined became the type specimen for the species. A type specimen is the specimen used as the basis for the description of a species. Type specimens are reference specimens that scientists around the world study to identify and classify new species. Its images and data are freely available on the Natural History Museum’s Data Portal alongside over 6 million specimen records that have also been digitised.

The Animal That Challenged the Rules

What made the platypus seem fake back then still makes it fascinating today. The creature’s ducklike bill serves as a highly sophisticated sensory organ, while its tail and its webbed, clawed feet are ideal for both swimming and burrowing. Another unexpected feature discovered later on is that it lays eggs like birds or reptiles, but it is still considered a mammal as it feeds its young with milk.

Uniquely, males possess spurs connected to venom glands on their hind legs, so they are capable of envenomation, a rare trait among mammals.

Before the platypus, mammal classification seemed relatively simple, but this mix of features really challenged the scientific understanding of the time. Only much later did a fuller understanding emerge: nature doesn’t always comply with the neat categories we create. Sometimes, nature throws up something that defies all expectations, something so different that even an expert first assumes it is fake.

It was eventually placed along with echidnas in a brand-new order, Monotremata, the egg-laying mammals. Monotremes are an early branch of the mammal family tree, having split from placental mammals and marsupials more than 160 million years ago. The modern species has been in Australia for only about 5 million years. This is quite remarkable as it still beats our species by a few million years.

A Reminder That Nature Loves to Surprise Us

The platypus stands as a delightful example of science being humbled by nature. It fooled early observers not because it was artificial, but because it was real in a way they had never imagined.

Explore the Platypus online as part of the Natural History Museum’s Digital Collection and follow @NHM_Science on Instagram for more stories about our collections.

-

Fakes in science activity download by Lucas Canino, an artist in residence at the Healthy Scepticism project.

Test your skills with ‘Spot the difference’, ‘Connect the dots’ and other interactive games and puzzles designed to highlight how scientific evidence and authority can be (mis)interpreted, manipulated & hallucinated.

-

Errol Francis from Culture& writes on one of history's most persistent examples of fake science, race science.

Read blog here

-

War for the Ashanti Golden Stool: History, Resistance, and the Making of a Myth

Long before the British marched into Kumasi with rifles and imperial certainty, the Ashanti already possessed something far more enduring than military power. At the centre of their world was not a throne, nor a crown, but a stool – small in form, immeasurable in meaning. The Golden Stool, Sika Dwa Kofi, was not crafted merely as an object of kingship. It was believed to have descended from the sky, summoned by the priest Okomfo Anokye and received by Osei Tutu, the first Asantehene. In that moment, the Ashanti were not simply unified politically; they were bound spiritually.

The stool was said to hold the sunsum, the collective soul of the Ashanti people. It was never to be sat upon, not even by the king. Instead, the king ruled in relation to it, deriving legitimacy from its presence. To possess the Golden Stool was not to hold power; it was to safeguard the very essence of a nation. And to lose it would not merely signal defeat; it would mean dissolution.

This was something the British Empire did not understand.

By the end of the nineteenth century, British authority had extended deep into the Gold Coast. In 1896, they exiled Prempeh I, the Asantehene, believing they had effectively subdued Ashanti resistance. But they had not reckoned with the Golden Stool. It remained beyond their reach, both physically and conceptually.

In 1900, Governor Frederick Hodgson arrived in Kumasi and made a demand that would ignite a war. Standing before Ashanti chiefs, he asked, with imperial bluntness: “Where is the Golden Stool? Why am I not sitting on it?” To Hodgson, it was a symbol of authority to be claimed, as one might claim a palace or a flag. To the Ashanti, the demand was an affront of the highest order, a violation not only of sovereignty but of the sacred.

The chiefs hesitated. It was not fear alone, but the weight of what was at stake. Then Yaa Asantewaa, Queen Mother of Ejisu, rose to speak. Her words cut through hesitation and called for action. If the men would not fight, she declared, then the women would. Under her leadership, the Ashanti took up arms.

What followed was not merely a rebellion but a defence of metaphysical ground. The War of the Golden Stool began not with territory, but with meaning.

Even as the conflict unfolded, another decision had already been made, one far more consequential than any battlefield manoeuvre. The Golden Stool would be hidden.

Removed from its known location under conditions of strict secrecy, it was taken deep into the forest. Only a select few knew where it lay concealed. There were no maps, no written records, no careless whispers. In this act, the Ashanti did something strategically profound: they withdrew the object of conflict from the reach of imperial power altogether.

The British laid siege to Kumasi and eventually suppressed the uprising. Yaa Asantewaa was captured and exiled. The machinery of empire prevailed in military terms. But the Golden Stool remained unfound.

The British searched. They interrogated. They speculated. But they never held it.

Years passed. The war faded into the administrative routines of colonial rule. Then, in 1920, an unexpected event disturbed the fragile equilibrium. A group of labourers, working in the forest, stumbled upon something hidden, something gold. Not fully grasping its significance, they stripped parts of it, removing ornaments of value.

Word spread quickly. What had been concealed with utmost care had now been exposed by chance.

The reaction among the Ashanti was immediate and severe. This was not theft in the ordinary sense; it was desecration. The colonial authorities, perhaps now more aware of the stool’s importance than they had been two decades earlier, intervened. The individuals responsible were arrested and punished. Crucially, the British did not attempt to seize the stool for themselves. It was returned to Ashanti custodians and restored to its sacred status.

The soul of the Ashanti, though disturbed, had not been taken.

And yet, in the years that followed, particularly in popular retellings, another story began to circulate. It was a simpler story, neater in its resolution, and perhaps more satisfying to tell: that the Ashanti had deceived the British by presenting them with a fake Golden Stool.

In this version, cunning triumphs cleanly over power. The British, arrogant and unsuspecting, accept a replica while the real stool remains hidden. It is a story of clever resistance, of psychological victory compressed into a single act.

But there is a problem.

There is no evidence it happened.

Colonial records, often detailed, sometimes embarrassingly so, make no mention of such a deception. They do not describe acquiring a stool, real or fake. Instead, they consistently report frustration, absence, and failure. The British did not take the wrong stool. They took none at all.

So why does the story persist?

Part of the answer lies in narrative instinct. The historical reality is unresolved: a powerful empire demands a sacred object and never obtains it. There is no dramatic reveal, no moment of recognition. The stool simply remains beyond reach. For many, this feels incomplete, and so a story emerges to complete it.

Another part lies in misunderstanding. To an external observer, a stool may seem replicable, an object that can be copied in gold and form. But the Golden Stool is not defined by its material. Its authority derives from origin, ritual, and belief. A replica would not deceive in any meaningful sense, because it would not be the stool.

And yet, the persistence of the “fake stool” story reveals something important, not about Ashanti history, but about how history is told. It reflects a desire to frame resistance in terms of wit and trickery, rather than in terms of discipline, unity, and restraint.

Because the real story is, in some ways, more demanding.

The Ashanti did not win by deception. They did not outwit the British in a single clever exchange. They did something quieter and more difficult: they protected what mattered by refusing to expose it. They maintained secrecy under pressure. They sustained a shared understanding of the stool’s meaning across a fractured political landscape. They ensured that, even in defeat, something essential remained intact.

The Golden Stool was never handed over, neither in truth nor in disguise.

And so the enduring lesson is not that the British were fooled, but that they were denied. Not by illusion, but by conviction. The legacy of this event goes beyond the survival of a single object. It is a story of a people who understood that power is not only expressed through force, but through identity, unity, and belief.

The false story speaks of a clever trick.

The true story speaks of a people who understood that some things cannot be surrendered, not because they are guarded, but because they are lived. And that resistance can take many forms. Sometimes it is loud and visible. Other times, it is quiet, strategic, and just as effective.

In that sense, the Golden Stool was never truly hidden.

It remained exactly where it had always been: at the centre of the Ashanti world, beyond the reach of those who could not comprehend it.

Credit: Caesar Atuire, Dodzi Koku Hattoh, Agathine Asamoaning, Selma Yoyowah, and Eugene Ankamah, for curating this blog on the well-known story of the Fake Golden Stool, where what was deemed “fake” became a powerful instrument for preserving what was most real.

-

Fake Diseases: How We Got Here, How It’s Going.

Most who watch Penny Lane’s 2018 documentary The Pain of Others remember it for the bizarre nature of the disease it describes and the outrageous lengths to which its three protagonists go to gain relief from it. One woman shaves her head. Another drinks her own urine. Both are driven to these acts by the unrelenting symptoms of Morgellons: skin lesions that emit long colorful fibers; stinging, burning, and even crawling sensations just under the skin; chronic fatigue and more.

By and large, medicine has a different definition for Morgellons. On its website, the prestigious Mayo Clinic relocates the disease from the skin to the mind of its sufferers: it is a variant of something called “delusional parasitosis,” it says, which is described as the “belief” that creepy crawly things - parasites, of course, but also other unpleasant invaders - are on, in or under your skin, even though they are not. It advises “compassionate treatment” but firmly suggests that this ought to be of the psychiatric kind.

As a London psychiatrist explained to me, for this reason one past treatment plan for Morgellons sufferers was “treatment by subterfuge.” It is as it sounds. Dermatologists, to whom Morgellons patients were frequently referred, would treat these patients not with drugs from their own skin-focused armamentarium but with drugs borrowed from their colleagues in psychiatry. The patient would be left to presume that their symptoms had been taken seriously and that their skin was being treated by drugs necessary to allay these symptoms. They would not know that they had instead been prescribed drugs to treat their delusional belief that these symptoms were real. To practitioners, the rationale was that this was a harmless, even a good, lie. And when these treatments were accompanied by a reduction in or cessation of symptoms, it was also a lie that confirmed what clinicians already presumed: Morgellons wasn’t a “real” disease.

Of course, this was hardly the “compassionate treatment” that the Mayo now recommends as the right approach to Morgellons. It is also not a treatment that works effectively in the age of the internet: a little googling on the part of the patient ruins the ruse. The result is not at all the desired effect from the medical side, and not only in the sense that the “delusional parasitosis” remains untreated. Quite obviously, too, such a tactic communicates to the patient what they had most feared: that they have not been taken seriously in their concerns; that they have been treated as though they were “crazy,” both by being treated with antipsychotics and by being lied to about that treatment; that their doctor and quite possibly the entire medical profession cannot be trusted, and so on. And in the meantime, their symptoms persist.

Doctors don’t want that. But the medical establishment has been largely immovable (with certain exceptions) in the view that this is a mental illness and not a physiologic one. And this makes the conundrum on the medical side one that focuses mostly on how to treat a disease that masquerades as a real entity, but is not one, without resorting to deception, coercion or, god forbid, force.

On the patient side, the stakes are entirely different. For to be pawned off on to a psychiatrist is typically taken as a refutation: an indication that these symptoms do not reference a “real” disease. Yet, they are experiencing real symptoms that take place on the body and they demand their answers there: a biomarker, a pathological finding, a test, perhaps, that will prove the reality of their experience.

This is undoubtedly why the third protagonist in Penny Lane’s moving film looks for the solution to her symptoms in the purchase of an expensive microscope. This irrefutably scientific instrument, she thinks, will allow her to finally officially produce the evidence needed to prove to sceptical doctors once and for all that her Morgellons is real.

In this, she is unsuccessful, and it is easy to see why. For in the painful segment that chronicles her experiments with the microscope, one of the essential and governing problems that plagues those who suffer from so-called “fake” diseases is revealed: the language of symptoms, the experience of illness and suffering, the feelings of hopelessness, betrayal and defeat that accompany and define such conditions defy translation into the language of science. For what this experimenter-sufferer sees through her microscope are threads that form themselves into letters that seem to her to indicate not just the life force of a real pathogen but even perhaps an attempt at communication.

By great contrast, what scientists conventionally have seen when they have examined these strands is far more banal. Though occasionally one reads a paper that suggests that these strands are biofilaments, made up of the keratin and collagen that also make up our skin, still more frequently asserted is the view that these are strands of polyester, cotton, wool or some of the other bits that make up the lint and fluff of our lives.

For patients of diseases that still await some fixed biomarker or pathological sign to mark them out physiologically, Lane’s portrait of Morgellons (which she fittingly describes as an “act of radical empathy”) paints an all too familiar figure. Like a whole host of other rare, poorly understood or simply dismissed diseases or symptom complexes, sufferers find themselves caught in one of the quintessential binds of modern medicine. It is not enough to just feel ill. One’s body also has to provide evidence of that illness of a sort that is medically legible and meaningful. And ideally, that evidence needs to tell a story that writes it onto another story of disease that is already well-known. This gives a definitive diagnosis and hopefully a clear path to therapy as well. But flounder at any one of these points and you too could find yourself in the position of the microscope-toting Morgellons sufferer, searching for anything that will convince doctors to take you seriously.

This is a relatively new phenomenon. For centuries, symptoms formed the material core of medical therapy. It wasn’t that there was no knowledge or conception of disease per se. It was instead that the symptoms of the individual experiencing a disease mattered a lot, perhaps more than the disease itself. My bout of plague might be broadly similar to your bout of plague in certain ways. But in other ways, because my body is not your body, it would also be different. Those differences were critical, and they would show up in our symptoms. And they mattered, not least to the kinds of treatments we each might be offered.

Scientific medicine shifted this view, especially over the late 19th and early 20th centuries. Preferring to think across bodies and their symptoms, rather than focusing on them each separately, this new iteration of medicine singled out pathogens as responsible for disease (and thus the focus of treatment). Symptoms still mattered, but more as signals: what they could say about disease became more important than what they said about the bodies on which illness occurred or the treatments that were therefore appropriate. My bout of plague was now also your bout of plague, as far as medicine was concerned.

What happened on and to the individual body in particular, then, lost pride of place to what could be deciphered about a set of common symptoms - and the disease they might suggest - across different bodies. Symptoms were the vehicles that gave voice to a diagnosis, either already known or in need of figuring out.

This was a far more efficient way to go about doing medicine. Rather than spending time and resources on each individual, medicine could produce a therapy that would address the enemy - the pathogen - common to all people with the same symptoms. And it was successful. Indeed, the rightness of this logic is often exemplified in terms of the miraculous effects of therapies like antibiotics. For when a bacterial enemy was properly identified and treated with the right antibiotic agent, a near-instant cessation of symptoms was the result. It didn’t matter one bit whose body it was or what their symptoms were. It only mattered that those symptoms came and went in accordance with the presence and absence of the bacteria.

Quite obviously, this left little space for symptoms on their own. In our current medical way of thinking, symptoms are a bit like pronouns without their referents. If they don’t point to disease, what, if anything, do they point to? And what do you do with them? Dismiss them out of hand until they reveal a real disease? Accuse those who have them of making them up? Relegate such patients to psychiatrists, as though these are the medical professionals to tend the scrap heap of presumed-fake-until-maybe-later-when-we-know-more diseases? In addition to the damage this does to those who are relegated there, it casts a weird pall on how we regard the reality of psychiatric illnesses more largely.

It’s easy to blame doctors or health care practitioners or healthcare systems for medicine’s logical failures. But these logics are also fully embedded in the fabric of our own understandings about health and its care. For decades, medical films and television programs have doubled down on the expectation that as soon as one sets foot onto medicine’s stage, diagnosis and treatment (with a soupçon of soapy melodrama and lashings of miraculous saves) are obviously on the menu, if not the only things available. Oh sure, there are the patients for whom nothing can be done, and those who are difficult to diagnose, but the former typically accept their fate, and the latter are by and large saved by a handsome doctor’s light bulb moment as he hears or sees something entirely unrelated and then makes the connection. This diet of weekly narrative closure precludes the possibility of real life’s open-endedness, never mind the lingering persistence of unexplained, inscrutable and ever-evolving symptoms.

One would not want to be a patient on these shows. Whether patients live or die, suffer or thrive, is their business, best undertaken off-screen. Our business as viewers remains focused around the mystery of disease and the satisfying narrative crack when it gives way, yielding the clarity and specificity of certainty.

So much depends on achieving that certainty. For even with the best and most holistic of clinicians and the most understanding of family and friends, the fact remains that the system requires this diagnosis, even if we do not. The processing of symptoms into the legibility of diagnostic certainty matters bureaucratically - access to medical services, eligibility for sick leave, support for disability all depend on this designation. This helps to explain why so much activism around diseases that modern medicine has set aside as fake turns not on the conceptual limitations of our current system, but on the more urgent issue of getting these fake diseases registered as real. Though it means doubling down on the very system that has excluded them, it is also the singular pathway to our mainstream healthcare systems. As the renowned historian of medicine Charles Rosenberg put it nearly 25 years ago, diagnosis is so central to the modern care of health as to be positively tyrannical – it is the gatekeeper to our healthcare system, and it controls our passage through it. And it is also a critical source of how we parse illness at all, how we respond to the “pain of others.”

He’s still right, of course. Yet, awareness of illnesses that lack a solid disease identity is rising. Myalgic Encephalomyelitis (also known as Chronic Fatigue Syndrome), Long Lyme Disease and perhaps especially Long Covid among others have begun to force change in the way we approach such entities, occasioning what an eminent American psychiatrist described to me as a kind of “therapeutic surrender” that restores to symptoms and narratives of illness experience at least a seat at the therapeutic table. And with this, healthcare practitioners have become more attuned to medicine’s bigger picture: self-aware about what medicine can but also cannot do and what its structuring makes but also unmakes as possibilities in the clinic.

Caitjan Gainty is an award-winning writer and historian at King’s College, London. Her new book Healthy Scepticism: Tales of Doubt, Dissent and Distrust in Medicine is out 18 June from Hurst Publishers, available to pre-order here.

-

The Ridiculousness of Race Science: From Transatlantic Slavery to Museum Labels: Zandra Yeaman, Curator of Discomfort, The Hunterian, University of Glasgow.

Race science is one of history’s strangest intellectual performances: a group of people determined to prove a conclusion they already believed, armed with rulers, skulls, and an impressive amount of confidence.

From the eighteenth through the nineteenth centuries, European and American scholars attempted to classify humanity into biological ‘races.’ Charts were drawn. Measurements were taken. Latin words were added. It all looked extremely serious.

The trouble was that the ‘science’ worked backwards. The hierarchy came first, and the evidence was asked, very politely, to cooperate.

Despite its dubious foundations, race science proved remarkably useful. It helped justify systems of exploitation, including chattel slavery, and its influence still lingers in institutions such as museums.

When Pseudoscience Met Chattel Slavery

During the era of the Transatlantic Slave Trade, defenders of chattel slavery began upgrading their arguments.

Earlier justifications had relied on religion or economics. By the nineteenth century, however, a more modern approach emerged: science. Instead of saying, ‘we want cheap labour,’ the argument became, ‘science shows that Africans are naturally suited to labour’.

This had a convenient advantage. It transformed slavery from a moral choice into a biological inevitability.

One of the most enthusiastic contributors to this effort was Samuel George Morton, who collected hundreds of human skulls and measured their internal volume. His conclusion that Europeans had the largest brains and Africans the smallest aligned perfectly with the social hierarchy of his time. A remarkable coincidence.

Another figure, Josiah C. Nott, took things further by arguing that human ‘races’ might be separate species altogether. This was a helpful idea if one wished to resolve the moral discomfort of slavery. Mainly by redefining the people being enslaved.

The ‘Science’ Behind the Claims

If race science had been accurate, it would still raise serious ethical questions. Unfortunately, it was not even particularly good science and had no basis in reality.

Skulls were measured inconsistently. Samples were biased. Data that contradicted expectations had an unfortunate tendency to disappear. The process had all the outward signs of scientific rigor, but the reliability of a horoscope.

Later scholars revisited these studies. Among them was Stephen Jay Gould, who argued that Morton’s work reflected unconscious bias in measurement and interpretation. While historians continue to debate the details, the broader lesson remains. Scientific methods are not immune to human assumptions, especially when those assumptions are doing the work of supporting existing biases.

In other words, the numbers were presented as objective. The process that produced them, not so. Its legacy is racism, prejudice and discrimination.

Museums and the Afterlife of Race Science

The era that produced race science was also busy building museums.

University museums such as The Hunterian at the University of Glasgow, and institutions such as the British Museum, the Smithsonian Institution, and the Musée de l’Homme, assembled vast collections of artifacts, human remains, and cultural objects, often acquired through colonial networks that were, at best, ethically flexible.

These collections were then carefully organised according to categories that reflected the same worldview as race science.

European objects were sorted into neat historical narratives: Renaissance, Baroque and Classical chapters in a story of progress.

Objects from Africa, Oceania, and the Americas, meanwhile, were frequently grouped under headings like ‘tribal,’ ‘primitive,’ or ‘ethnographic.’ This structure is still used today, though not in the art collections.

The message was subtle but unmistakable. Europeans had history and art. Everyone else had… anthropology.

When People Became ‘Specimens’

If the labelling of objects was questionable, the treatment of people was far worse.

During the nineteenth century, human remains were collected as ‘racial specimens’. Skulls and skeletons were taken from burial sites or from colonised populations, often without consent and usually without much concern.

One of the most disturbing cases is that of Saartjie Baartman. Brought to Europe in the early 1800s, she was exhibited as a curiosity. After her death, her body was dissected and displayed for over a century at the Musée de l’Homme.

She was finally returned to South Africa in 2002, which is a long time to wait for basic human dignity.

Her story illustrates how easily scientific curiosity can become dehumanisation. Particularly when the subjects of study are denied a voice in how they are represented.

Why the Legacy Still Matters

At this point, it may be tempting to laugh at race science and, to be fair, it often invites it. Measuring intelligence by skull size now sounds about as credible as determining personality from tea leaves.

But the consequences were not funny.

Race science helped justify slavery, colonial rule, segregation, and discriminatory laws. Even after the theories collapsed, the systems they supported proved remarkably durable. The hierarchical systems and structures in institutions that we navigate every day are a testament to this.

Modern genetics tells a very different story. Humans are approximately 99.9 percent genetically identical. Variation exists, but it does not align neatly with traditional racial categories.

Those categories were social inventions. Very influential ones.

Rethinking the Museum

Museums today are increasingly confronting this history.

Questions are being asked that might have seemed unnecessary a century ago:

Who created these categories?

How were these objects acquired?

Who gets to interpret them now?

For example, at The Hunterian we are working towards repatriation of human remains and cultural artifacts. We are rethinking how collections are labelled and displayed.

It is, in many ways, an attempt to turn the museum from a cabinet of certainty into a space of conversation.

Picture of Leaders of the Khoi and San communities paying respects to their ancestors during a repatriation ceremony at the University of Glasgow. Image © Martin Shields.

The Real Lesson

Race science is easy to mock, and in many respects, it deserves it. There is something inherently absurd about elaborate measurements designed to confirm what their authors already believed.

But its real significance lies in how effective it was.

It shows that scientific language can be used not only to discover knowledge, but to justify existing power structures with graphs.

The challenge today is not just to recognise the absurdity of past ideas, but to remain alert to similar patterns in the present, such as moments when conclusions arrive first and evidence is invited in afterward.

The measuring tapes may be gone. The confidence, unfortunately, is still available.